Sorting out your inspection problems

Optiserve specializes in optical sorting solutions for the food industry. Besides refurbishing optical sorting machines, the company has also developed a state-of-the-art sorting machine in-house, building on the knowledge and experience gained from refurbishing a wide range of sorting machines.

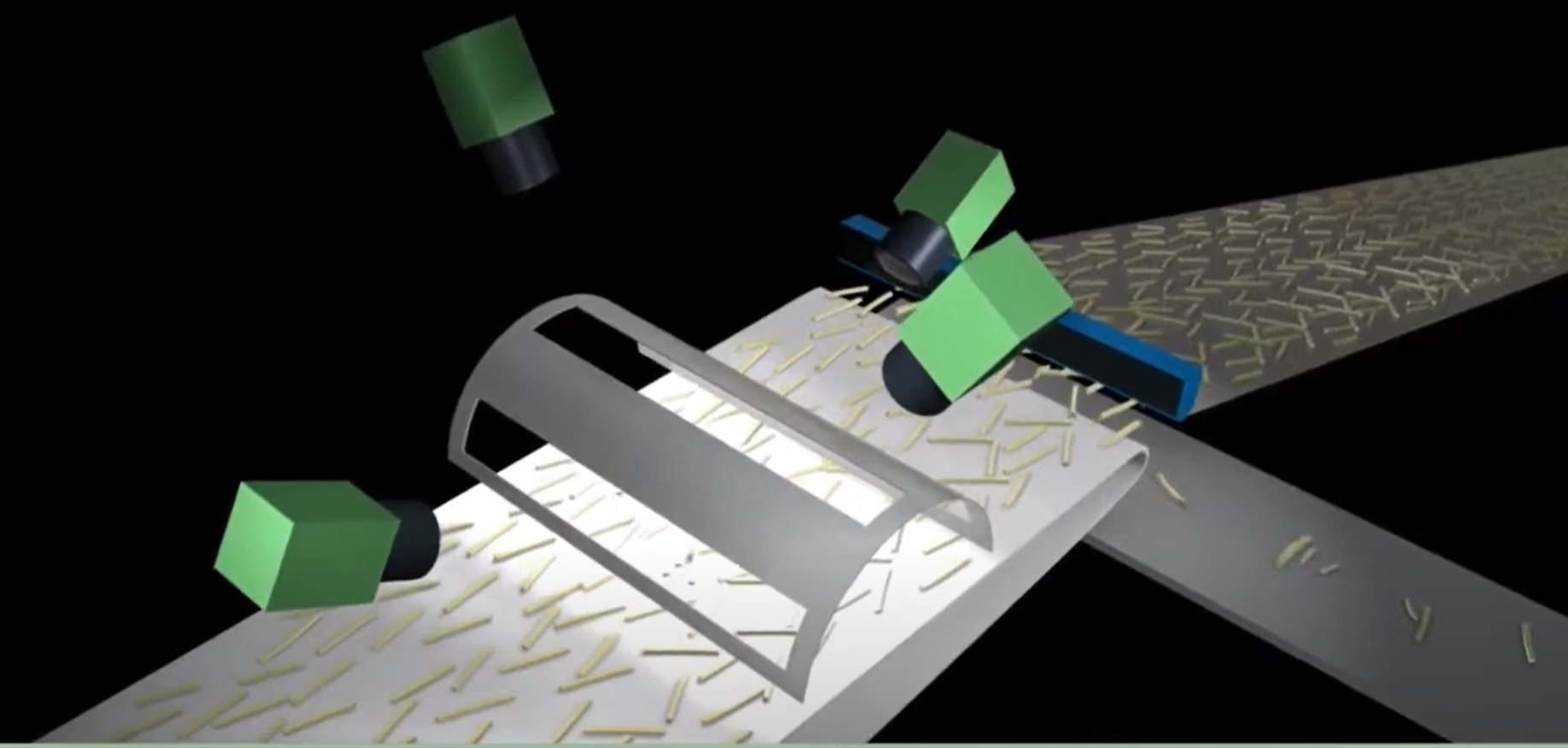

VI Technologies was tasked with developing the machine vision software as well as the machine control hardware and software. Using NI hardware and software, we were able to integrate all of these components into one platform. The first development phase focused on sorting french fries by length and defects. Because the machine vision software was built modularly, the sorting applications could quickly be expanded to potato cubes, potato wedges, and even carrots and apples.

This application required expertise in many different fields, ranging from machine vision to FPGA programming. One of the key features of this application is the high belt speed. By using a cRIO with an onboard FPGA, data can be processed quickly, which ensures timely control of time-critical components such as the eject valves. To align the camera acquisition with the belt position, the FPGA also reads out a belt encoder and translates its signal into coordinates for the machine vision software.
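The encoder-to-coordinate translation can be illustrated with a minimal Python sketch. It is not the NI-based implementation used on the machine; the class name `BeltEncoder`, the encoder resolution, and the roller circumference are all assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BeltEncoder:
    """Hypothetical encoder mounted on a belt roller (values are assumptions)."""
    counts_per_rev: int             # encoder counts per roller revolution
    roller_circumference_mm: float  # belt travel per roller revolution

    def mm_per_count(self) -> float:
        # Belt travel represented by a single encoder count.
        return self.roller_circumference_mm / self.counts_per_rev

    def position_mm(self, counts: int) -> float:
        # Belt travel since the counter was last reset.
        return counts * self.mm_per_count()


encoder = BeltEncoder(counts_per_rev=2000, roller_circumference_mm=500.0)
print(encoder.mm_per_count())     # belt travel per count, in mm
print(encoder.position_mm(4000))  # belt position after 4000 counts, in mm
```

Expressing positions in encoder counts rather than in time keeps the coordinates valid even when the belt speed changes.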

Using line scan cameras and NI frame grabbers, the camera acquisition could be linked to the belt position encoder. After acquisition, the images are corrected for lens distortion, perspective distortion, and lighting variations. This makes the images comparable to one another, which increases the overall accuracy of the system. Once the images have been analyzed, individual products are labeled and, where necessary, flagged for removal. The coordinates of these products are sent back to the FPGA, which opens the valves at the right time and position.
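As a rough illustration of how the coordinates of a flagged product might be turned into a valve command, the following Python sketch maps the across-belt position to a valve and the along-belt position to an encoder count at which to fire. It is a sketch under stated assumptions, not the actual FPGA logic: `EjectorLayout`, `EjectCommand`, `plan_eject`, and all numeric values are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EjectorLayout:
    """Hypothetical valve bar geometry (all values are assumptions)."""
    valve_pitch_mm: float        # spacing between adjacent valves across the belt
    valve_count: int             # number of valves on the bar
    camera_to_valve_counts: int  # encoder counts from the scan line to the valve bar


@dataclass(frozen=True)
class EjectCommand:
    valve_index: int    # which valve to open
    fire_at_count: int  # encoder count at which to open it


def plan_eject(layout: EjectorLayout, x_mm: float, seen_at_count: int) -> EjectCommand:
    """Pick the valve covering x_mm and the encoder count at which to fire it."""
    index = int(x_mm // layout.valve_pitch_mm)
    # Clamp so that products at the belt edges still map to a real valve.
    index = max(0, min(layout.valve_count - 1, index))
    return EjectCommand(index, seen_at_count + layout.camera_to_valve_counts)


layout = EjectorLayout(valve_pitch_mm=5.0, valve_count=128, camera_to_valve_counts=3200)
print(plan_eject(layout, x_mm=42.0, seen_at_count=10_000))
```

Scheduling the valve against an encoder count instead of a timer means the command stays correct if the belt speeds up or slows down between imaging and ejection.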

Finally, an extensive user interface (UI) had to be developed. All of the underlying calculations had to be condensed into an easy-to-use UI that is displayed on a touch screen. In the UI, an operator can change sorting criteria, control the machine, and view sorting statistics. The statistics are also shared through a web service and an OPC UA server for remote monitoring.